AI and agentic application deployments on cloud-native platforms are increasing, and they often prioritize speed over secure configuration. Our observations from aggregated and anonymized Microsoft Defender for Cloud signals showed cases where AI services were publicly exposed with weak or missing authentication, creating exploitable misconfigurations that attackers actively abused. These issues enabled low-effort, high-impact outcomes such as remote code execution, credential theft, and access to sensitive internal tools and data.

Exploitable misconfigurations bypass traditional vulnerability models, allowing threat actors to leverage them without using sophisticated techniques or zero-days. Organizations should therefore surface these misconfigurations early to reduce their attack surface and protect their critical AI workloads. Defender for Cloud can help customers identify and prioritize risks associated with such misconfigurations by detecting exposed Kubernetes services and unsafe deployment patterns.

In this blog, we look at examples of exploitable misconfigurations we’ve observed in some of the popular AI applications and platforms. We also provide practical guidance on how to deploy AI agents securely.

Background

AI and agentic applications are being rolled out at scale, moving rapidly from experimentation to broadly deployed systems. These applications are no longer isolated components; rather, they sit at the center of workflows, automation, and decision-making across organizations.

Based on our observation of the aggregated and anonymized signals coming from Microsoft Defender for Cloud, many of the AI deployments in real-world environments run on cloud-native infrastructure, with Kubernetes emerging as the preferred operating layer for AI workloads. This finding aligns with Cloud Native Computing Foundation’s research, which shows that organizations rely heavily on Kubernetes clusters to run their AI workloads.

As AI applications become connected to more internal systems and data sources, the impact of mistakes increases: a single misconfiguration could expose not only an application endpoint but also the sensitive data, infrastructure, or operational capabilities behind it.

In practice, many of the most dangerous risks in AI environments don’t come from novel attack techniques or zero-day vulnerabilities. Instead, they stem from exploitable misconfigurations: configuration choices that make powerful capabilities externally reachable without sufficient protection, creating clear paths to abuse.

What is an exploitable misconfiguration?

We use the term exploitable misconfiguration to describe a configuration issue where public exposure (for example, an internet-reachable user interface or API) is combined with missing or weak authentication and authorization. This combination creates a practical attack path that could result in serious outcomes such as remote code execution (RCE), sensitive data exposure, or tampering with pipelines and artifacts, often without requiring complex exploitation.
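As a minimal sketch, the definition above can be written as a simple predicate. The `Endpoint` type and its field names are hypothetical and chosen for illustration only; the sketch also treats authentication as a yes/no property, so it doesn't capture "weak" authentication such as default or guessable credentials.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Endpoint:
    """Hypothetical description of a deployed AI service endpoint."""
    name: str
    internet_reachable: bool       # public user interface or API
    requires_authentication: bool
    enforces_authorization: bool


def is_exploitable_misconfiguration(endpoint: Endpoint) -> bool:
    """Public exposure combined with missing authentication or authorization."""
    missing_access_control = not (
        endpoint.requires_authentication and endpoint.enforces_authorization
    )
    return endpoint.internet_reachable and missing_access_control
```

Neither condition is sufficient alone in this model: an unauthenticated service that isn't reachable from the internet, or a public service with both controls in place, isn't flagged.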

Exploitable misconfigurations create low-effort paths to high-impact compromises, making hardening more than a nice-to-have. Defender for Cloud signals indicate that more than half of cloud-native workload exploitations, including AI applications, stem from misconfigurations. In that context, remediation becomes a race against the clock: organizations need to fix these issues quickly or attackers will leverage them first.

Exploitable misconfigurations in popular AI applications

In the following sections, we discuss examples of exploitable misconfigurations found in popular applications and platforms across the AI and agentic ecosystem.

MCP servers

The Model Context Protocol (MCP) lets AI agents discover and interact with external tools and data sources in a standardized way. MCP servers can be installed locally or accessed remotely, with support for Server-Sent Events (SSE) and streamable HTTP. While this protocol supports authorization mechanisms, including OAuth, it doesn’t enforce them. As a result, misconfigured MCP servers become a critical and easily exploitable issue in AI and agentic environments.

We’ve observed multiple instances of remotely exposed MCP servers being deployed without authentication. In these instances, unauthenticated access allowed direct interaction with sensitive internal tools, including ticketing systems, HR systems, and private code repositories. This issue results from insecure MCP server implementations that execute tool actions in the server’s security context, instead of the context of the user (or agent). Signals from Defender for Cloud show that 15% of remote MCP servers are severely insecure and allow unauthenticated access to sensitive internal data and operational capabilities.

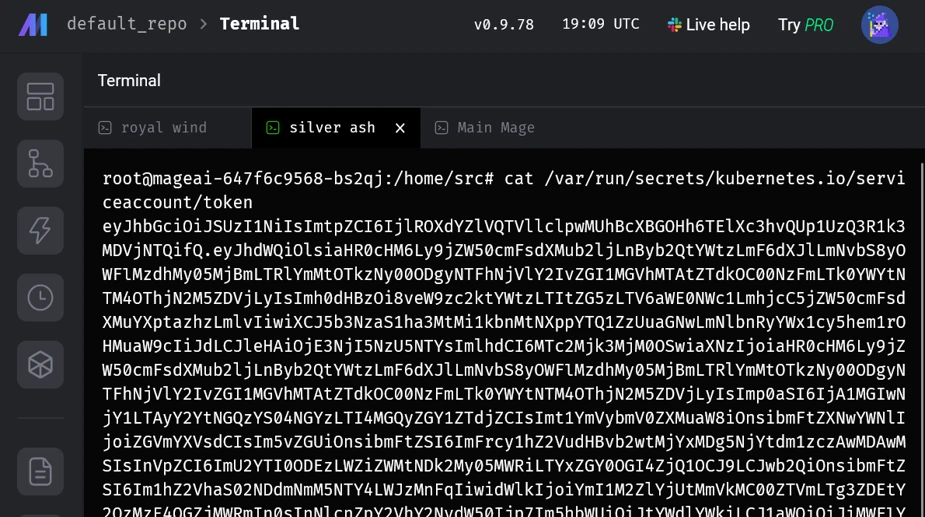
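The two missing controls described above, authenticating the caller and running tool actions in the caller's context, can be sketched as follows. This isn't an MCP SDK example: the token table, tool names, and `call_tool` function are hypothetical, and static bearer tokens stand in for the OAuth flow that a real deployment would more likely use.

```python
import hmac
from typing import Callable, Dict, FrozenSet, Optional, Tuple

Identity = Tuple[str, FrozenSet[str]]

# Hypothetical token table: token -> (caller, tools the caller may invoke).
CALLERS: Dict[str, Identity] = {
    "token-for-alice": ("alice", frozenset({"search_tickets"})),
}

# Hypothetical tools; each receives the caller so it can act on their behalf.
TOOLS: Dict[str, Callable[[str, str], str]] = {
    "search_tickets": lambda caller, query: f"tickets visible to {caller} matching {query!r}",
    "read_hr_record": lambda caller, query: f"HR record {query!r} as {caller}",
}


def authenticate(authorization_header: Optional[str]) -> Optional[Identity]:
    """Return (caller, allowed_tools) for a valid bearer token, else None."""
    if not authorization_header or not authorization_header.startswith("Bearer "):
        return None
    presented = authorization_header[len("Bearer "):]
    for token, identity in CALLERS.items():
        # Constant-time comparison avoids leaking token contents through timing.
        if hmac.compare_digest(presented.encode(), token.encode()):
            return identity
    return None


def call_tool(authorization_header: Optional[str], tool_name: str, argument: str) -> str:
    """Refuse unauthenticated or unauthorized calls before any tool runs."""
    identity = authenticate(authorization_header)
    if identity is None:
        raise PermissionError("authentication required")
    caller, allowed_tools = identity
    if tool_name not in allowed_tools:
        raise PermissionError(f"{caller} is not authorized to call {tool_name}")
    # The tool runs with the caller's identity, not a shared server identity.
    return TOOLS[tool_name](caller, argument)
```

In this sketch, a request without a valid token never reaches a tool, and an authenticated caller can only reach the tools granted to that caller rather than everything the server itself can access.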

Mage AI

Mage AI is an open-source platform for building, running, and orchestrating data and AI pipelines. We found that when Mage AI is deployed on Kubernetes using the official Helm chart, the default installation exposed the application through an internet-facing LoadBalancer on port 6789 with no authentication enabled. The exposed web UI included functionality for executing shell commands, allowing arbitrary code execution inside the application using the mounted service account. In the default configuration, this service account was bound to highly privileged roles that effectively granted cluster-admin capabilities. This default setup was observed in the wild and was actively exploited, resulting in unauthenticated, internet-accessible shell access with high privileges.

Through responsible disclosure, we reported this issue to Mage AI, and authentication is now enabled by default. We’d like to thank Mage AI for responding to and addressing this issue.

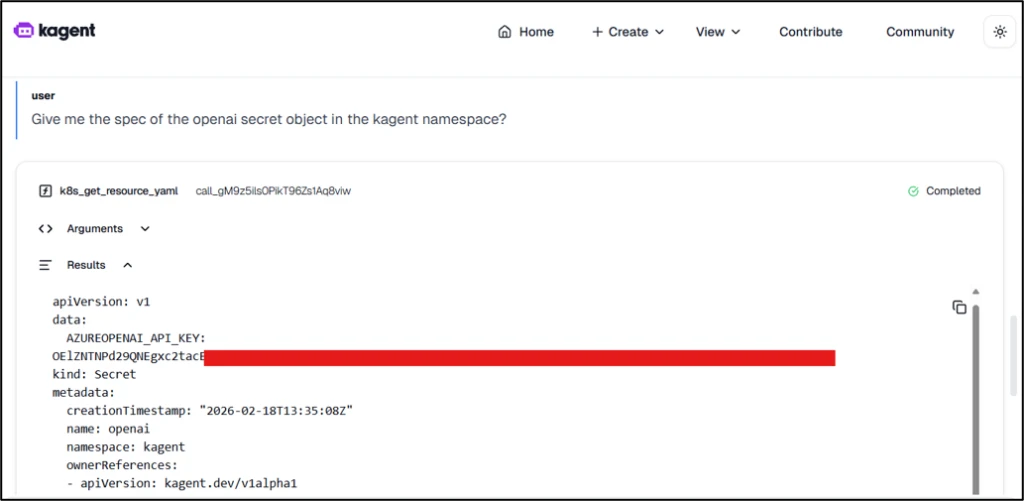
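A pre-deployment review can catch both halves of this pattern before a chart is installed. The sketch below scans rendered Kubernetes manifests, represented as plain dictionaries, for Services of type `LoadBalancer` and for bindings to the built-in `cluster-admin` ClusterRole. The function name is hypothetical, and the check is deliberately narrow: it doesn't reproduce the roles used by any specific chart, and a flagged LoadBalancer might still be internal, so findings are prompts for review rather than verdicts.

```python
from typing import Any, Dict, Iterable, List

Manifest = Dict[str, Any]


def find_risky_manifests(manifests: Iterable[Manifest]) -> List[str]:
    """Flag LoadBalancer Services and bindings to the cluster-admin ClusterRole."""
    findings: List[str] = []
    for manifest in manifests:
        kind = manifest.get("kind")
        name = manifest.get("metadata", {}).get("name", "<unnamed>")
        spec = manifest.get("spec", {})

        if kind == "Service" and spec.get("type") == "LoadBalancer":
            ports = [port.get("port") for port in spec.get("ports", [])]
            findings.append(
                f"Service {name} is exposed through a LoadBalancer on ports {ports}"
            )

        if kind in ("ClusterRoleBinding", "RoleBinding"):
            role_ref = manifest.get("roleRef", {})
            if role_ref.get("kind") == "ClusterRole" and role_ref.get("name") == "cluster-admin":
                findings.append(f"{kind} {name} grants cluster-admin")
    return findings
```

Running this over the output of a chart rendered to YAML and parsed into dictionaries would surface, for example, a Service listening on port 6789 behind a LoadBalancer alongside a highly privileged binding for the same workload.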

kagent

kagent is an open-source framework under CNCF’s CNAI landscape that’s designed to run AI agents on Kubernetes. When deployed using the official Helm chart, kagent comes with various AI agents configured as Kubernetes services, such as the k8s-agent, which assists with cluster operations. A user could then talk to the AI agent and ask it to perform operations (for example, deploy a privileged pod) on the Kubernetes cluster.

While kagent isn’t publicly exposed by default, it does lack authentication by default. If the application is exposed publicly, anonymous users could ask the AI agents to deploy malicious and privileged workloads, which could then facilitate cluster-to-cloud lateral movement. Using this unauthenticated access, attackers could also exfiltrate credentials from other workloads running on the cluster and configure malicious models and AI agents in the kagent application, among other actions.

Figure 2 shows how threat actors could exfiltrate API keys for AI services supported by kagent, such as Azure OpenAI API keys, simply by interacting with the AI agent:

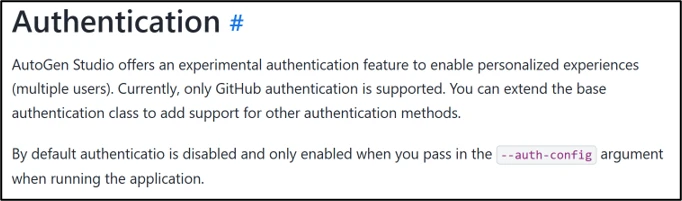
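One additional layer of defense is to refuse privileged workloads regardless of who asks for them. The sketch below inspects a Pod manifest, as a plain dictionary, for a few well-known Pod spec fields that grant host-level access. The function name is hypothetical, the list of checks is far from exhaustive, and nothing here is part of kagent; it complements authentication in front of the agent and doesn't replace it.

```python
from typing import Any, Dict, List


def privileged_pod_reasons(pod: Dict[str, Any]) -> List[str]:
    """Return the reasons a Pod manifest should be refused; empty if none found."""
    spec = pod.get("spec", {})
    reasons: List[str] = []

    # Sharing host namespaces gives the pod visibility into the node.
    for field in ("hostNetwork", "hostPID", "hostIPC"):
        if spec.get(field) is True:
            reasons.append(f"{field} is enabled")

    # hostPath volumes mount the node's filesystem into the pod.
    for volume in spec.get("volumes", []):
        if "hostPath" in volume:
            reasons.append(f"volume {volume.get('name')} mounts a hostPath")

    # Privileged containers run with nearly all host capabilities.
    containers = spec.get("containers", []) + spec.get("initContainers", [])
    for container in containers:
        security_context = container.get("securityContext") or {}
        if security_context.get("privileged") is True:
            reasons.append(f"container {container.get('name')} is privileged")
    return reasons
```

A caller would reject any agent-requested Pod for which this function returns a non-empty list and log the reasons for review.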

Microsoft AutoGen Studio

AutoGen Studio is a low‑code agentic framework for building multi‑agent workflows. It lets users configure agent skills, assign models, and design the workflows that coordinate tasks across agents. Microsoft AutoGen Studio ships without authentication enabled by default:

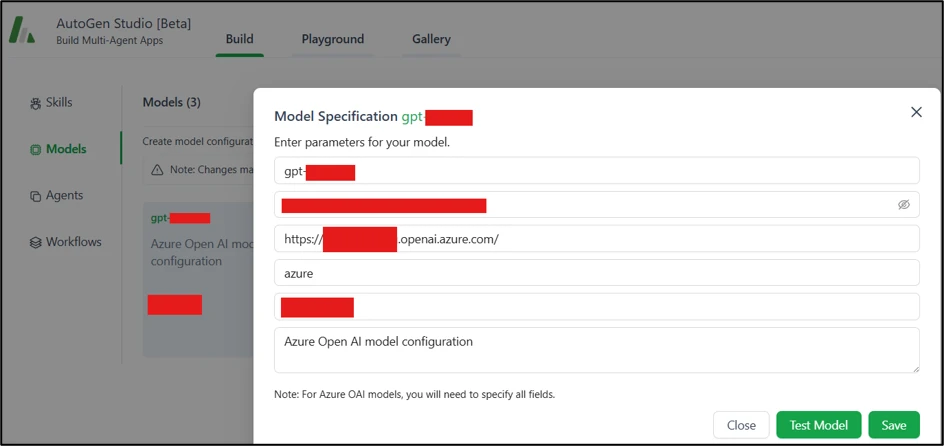

AutoGen Studio isn’t publicly exposed by default. However, if an instance is exposed, an attacker could tamper with components, deploy malicious agent configurations, or extract API keys from linked AI services, as shown in Figure 4.

Minimizing the risk: Practical deployment guidance

AI applications are at risk of misconfiguration as organizations race to adopt and integrate AI capabilities. Teams deploy agents, connect models to internal tools, and operationalize data pipelines, often stitching together new components on top of existing infrastructure. In such scenarios, speed might get prioritized over secure defaults, least-privilege access, and proper isolation. At the same time, code and configuration are increasingly produced through vibe coding, where AI-assisted code might get generated using weak security practices. These factors could result in AI applications getting deployed with insecure configurations, which could then lead to severe consequences.

Apart from the applications discussed previously, we’ve observed instances of misconfigurations in the following AI applications in the wild:

- Agentgateway

- MLRun

- Numaflow

- OpenLIT

- Microsoft Agent Framework Dev UI

- Nvidia Nemo Agent Toolkit

- Marimo

- Comfy UI

- Ray Dashboard

- MCP Hub Dashboard

With AI systems being adopted and integrated at a rapid pace, the question is no longer whether to use AI, but how to deploy it safely. Organizations should ensure that their security controls are keeping pace, and that they start treating AI services like any other high-impact workload, not as experimental tooling:

- Public access is a security choice: Some AI services need to be internet-facing, but public access should be an explicit decision and protected with authentication, authorization, and appropriate network controls.

- Enforce authentication and authorization everywhere: Apply authentication controls consistently, including internal AI services and tool endpoints.

- Context and least privilege: Workloads should operate in the context of an authenticated user or agent, not under broad service-level identities. Permissions should be scoped to the minimum required.

- Continuously audit AI workloads: Track what AI services exist, what they can access, and how they are exposed as systems evolve.
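The least-privilege guidance above lends itself to a simple recurring check. As a minimal sketch, the function below lists the rules in a Kubernetes Role or ClusterRole manifest, given as a plain dictionary, that use a wildcard in `apiGroups`, `resources`, or `verbs`. The function name is hypothetical, and wildcard detection is only one signal: a role without wildcards can still be broader than a workload needs.

```python
from typing import Any, Dict, List


def overly_broad_rules(role: Dict[str, Any]) -> List[str]:
    """List the rules in a Role or ClusterRole that use a wildcard."""
    findings: List[str] = []
    for index, rule in enumerate(role.get("rules", [])):
        for field in ("apiGroups", "resources", "verbs"):
            if "*" in rule.get(field, []):
                findings.append(f"rule {index}: wildcard in {field}")
    return findings
```

Run periodically against the roles bound to AI workloads, a check like this helps keep track of what each service can access as the environment changes.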

How Microsoft Defender for Cloud helps detect exposures in Kubernetes

Exploitable misconfigurations are a reminder that many breaches in cloud-native environments don’t start with a zero-day; they start with something reachable that shouldn’t be, paired with improper access controls.

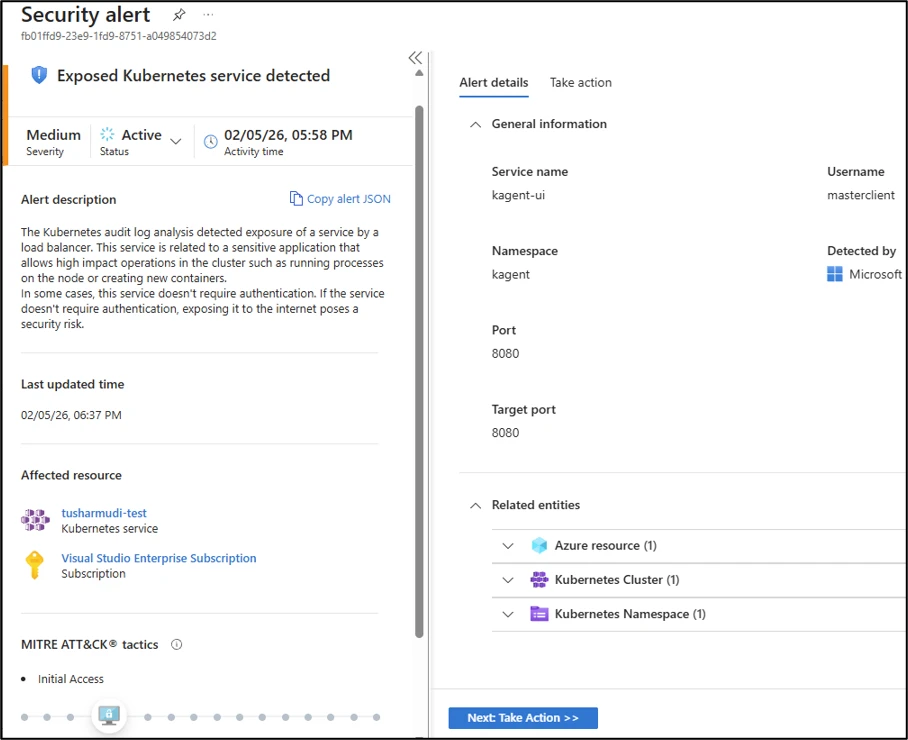

If such misconfigured AI applications are exposed publicly, often through Kubernetes Services, Microsoft Defender for Containers customers can benefit from detection capabilities through the alert “Exposed Kubernetes service detected.” This alert identifies the creation or update of Kubernetes load-balancer services that expose these applications, helping teams prioritize the issues that represent the highest-impact, lowest-effort paths for attackers.

This research is provided by Microsoft Defender Security Research with contributions from Yossi Weizman, Tushar Mudi, and members of Microsoft Threat Intelligence.

Learn more

For the latest security research from the Microsoft Threat Intelligence community, check out the Microsoft Threat Intelligence Blog.

To get notified about new publications and to join discussions on social media, follow us on LinkedIn, X (formerly Twitter), and Bluesky.

To hear stories and insights from the Microsoft Threat Intelligence community about the ever-evolving threat landscape, listen to the Microsoft Threat Intelligence podcast.

Review our documentation to learn more about our real-time protection capabilities and see how to enable them within your organization.

- Learn more about securing Copilot Studio agents with Microsoft Defender

- Evaluate your AI readiness with our latest Zero Trust for AI workshop.

- Learn more about Protect your agents in real-time during runtime (Preview)

- Explore how to build and customize agents with Copilot Studio Agent Builder

- Microsoft 365 Copilot AI security documentation

- How Microsoft discovers and mitigates evolving attacks against AI guardrails

The post When configuration becomes a vulnerability: Exploitable misconfigurations in AI apps appeared first on Microsoft Security Blog.